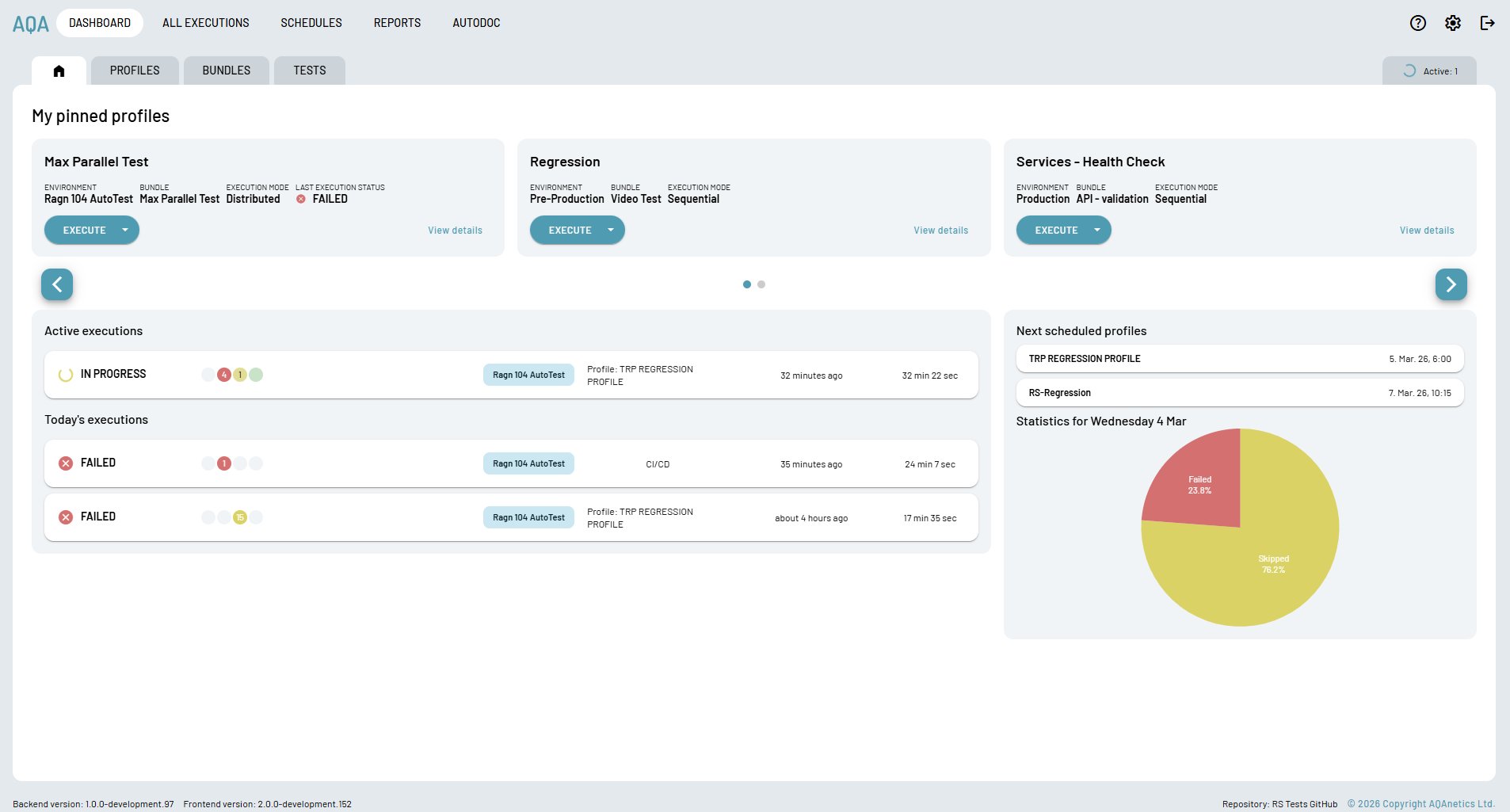

The dashboard surfaces what matters right now — which profiles are executing, what's scheduled next, today's pass/fail ratio, and one-click access to your most-used test profiles.

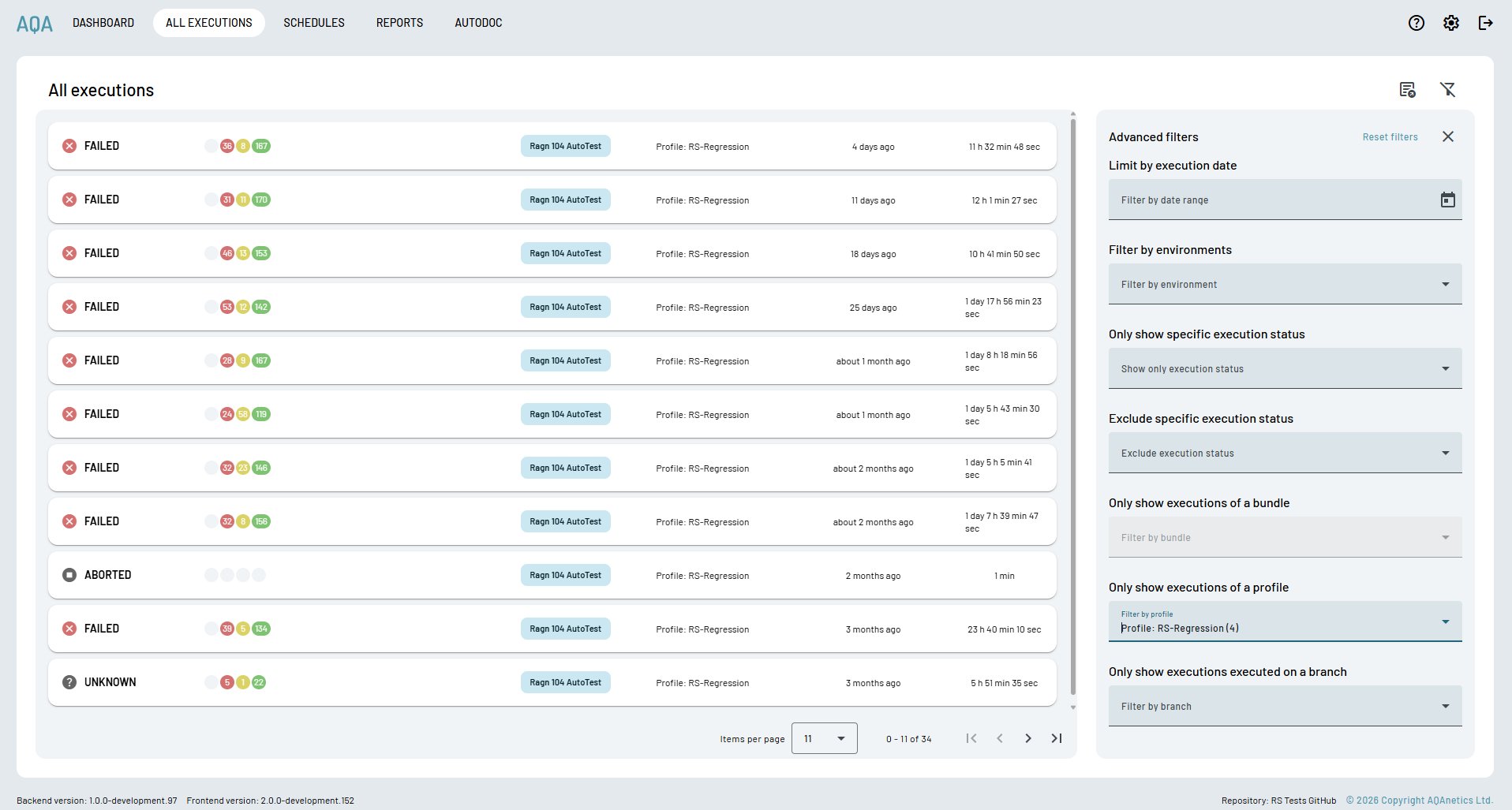

The complete execution history is stored persistently: date, environment, profile, duration, and pass/fail/skip counts for every run, filterable by any of these dimensions. Nothing gets lost.
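As a rough illustration of what filtering by any dimension means, the sketch below models a history entry with the fields named above and filters on arbitrary field values. The `RunRecord` class, the `filter_runs` helper, and the sample data are all hypothetical; the platform's actual storage schema and query interface are not described here.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class RunRecord:
    """Hypothetical shape of one execution-history entry."""
    run_date: date
    environment: str
    profile: str
    duration_seconds: float
    passed: int
    failed: int
    skipped: int


def filter_runs(runs, **criteria):
    """Keep only the runs whose fields equal every given criterion."""
    return [
        run for run in runs
        if all(getattr(run, field) == value for field, value in criteria.items())
    ]


# Made-up sample history, for illustration only.
runs = [
    RunRecord(date(2024, 5, 1), "staging", "smoke", 120.0, 40, 2, 1),
    RunRecord(date(2024, 5, 1), "production", "smoke", 95.0, 43, 0, 0),
    RunRecord(date(2024, 5, 2), "staging", "regression", 840.0, 310, 5, 12),
]

staging_runs = filter_runs(runs, environment="staging")
staging_smoke = filter_runs(runs, environment="staging", profile="smoke")
```

Passing several keyword arguments narrows the result, so `staging_smoke` contains only the first sample record.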

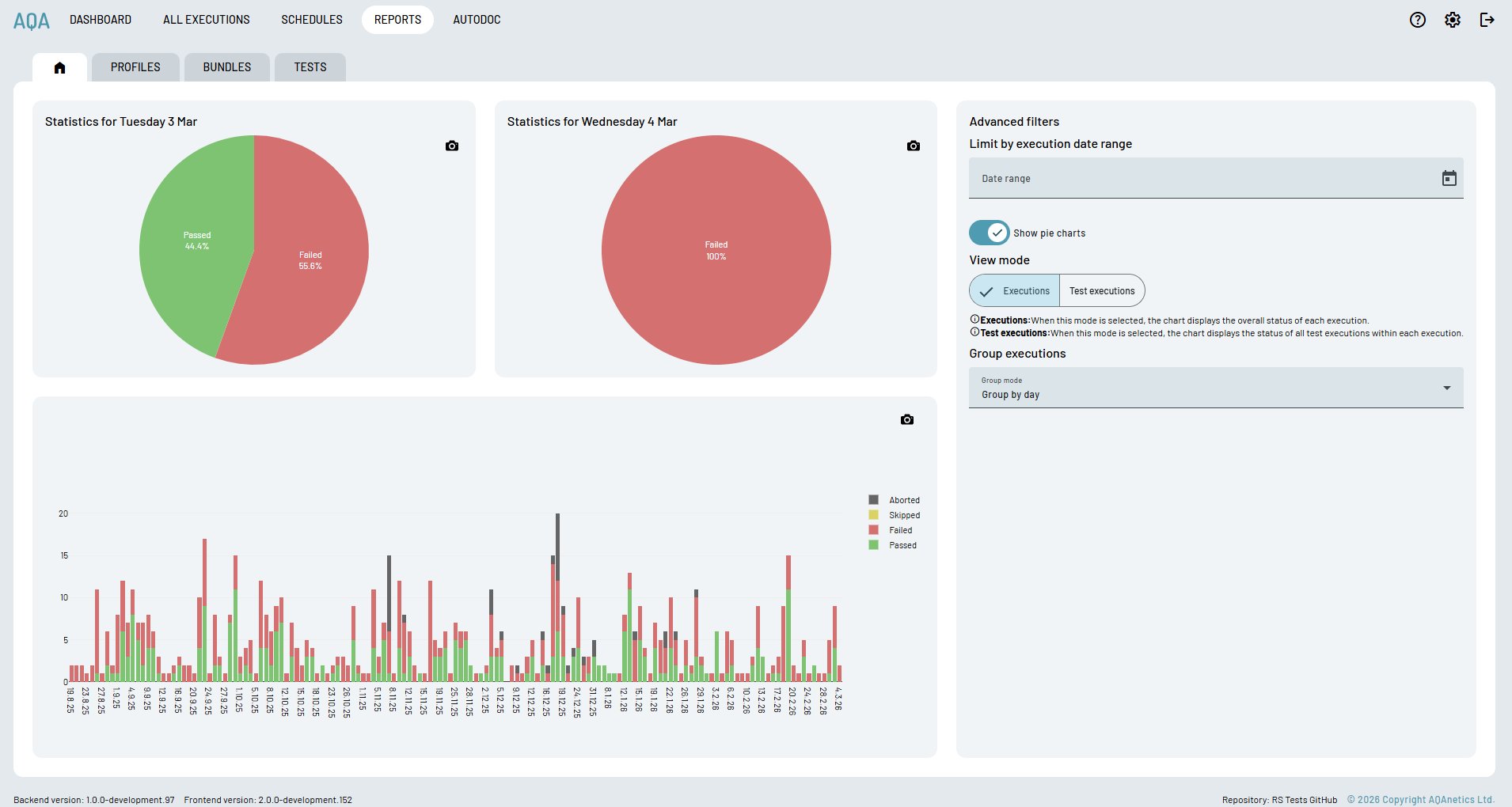

Daily pie charts and a historical bar chart spanning your entire execution history. See at a glance whether quality is improving, degrading, or stable — and share reports with stakeholders who don't need to touch the platform.

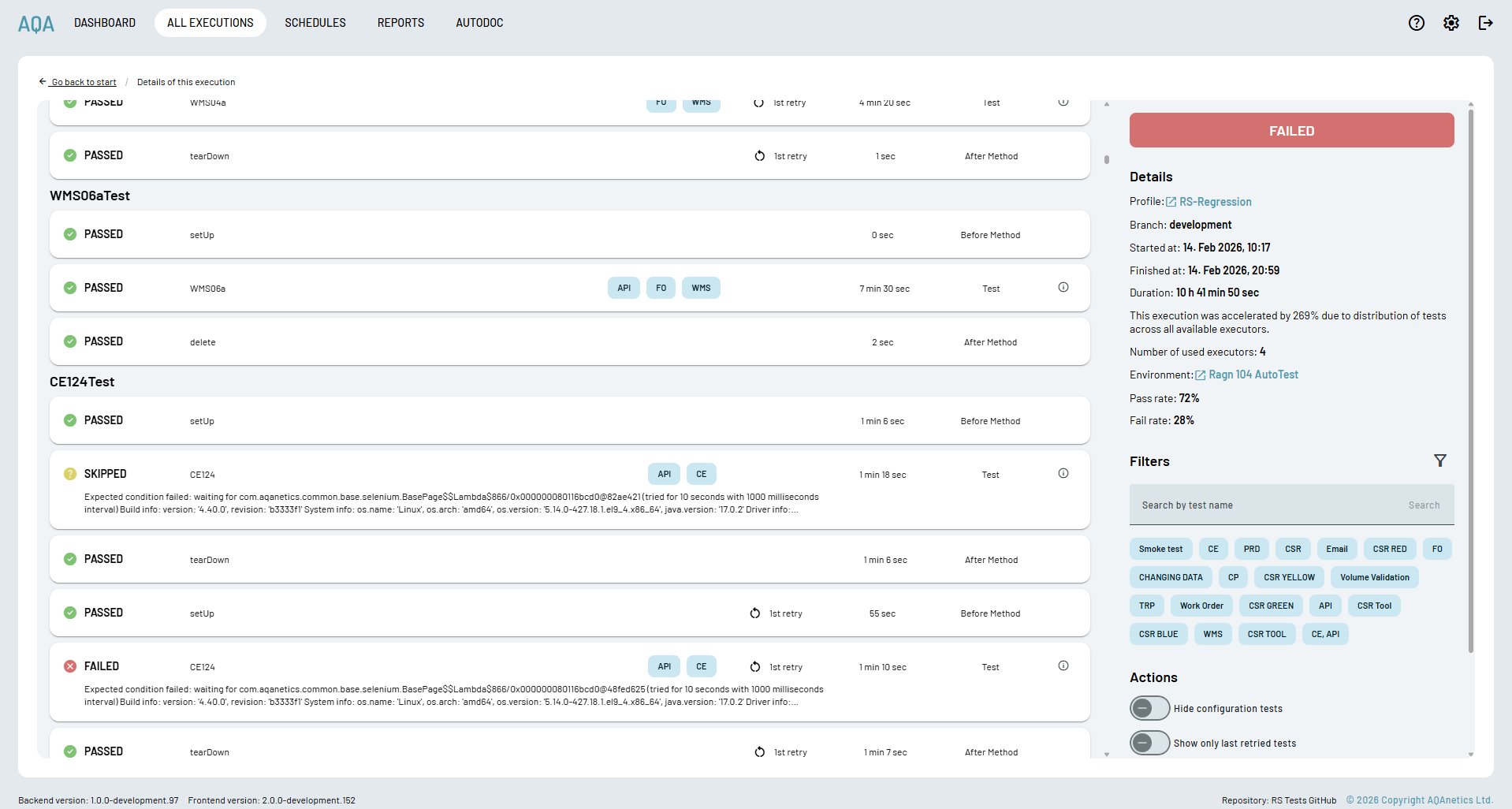

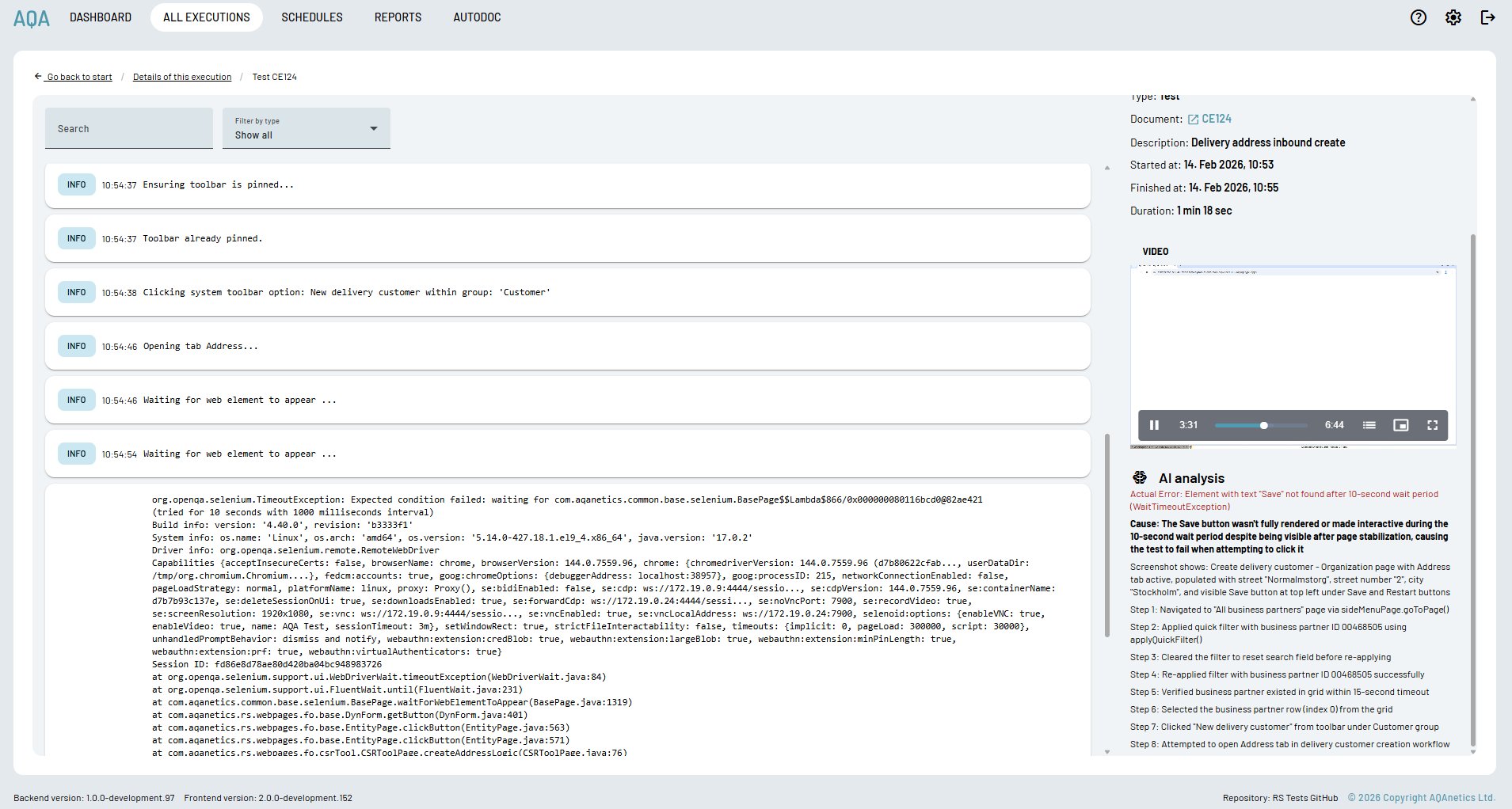

Drill into any run and see each test's full lifecycle — setup, execution, teardown — with per-phase status, duration, retry count, and tag filters. Distributed execution across 4 executors accelerated this run by 269%.
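The page does not state how the 269% figure is calculated. One common reading is "percent faster than a single-executor run", which the sketch below works through. The function names and the durations are assumptions chosen only to reproduce that number; they are not taken from the run shown.

```python
def speedup_factor(sequential_seconds: float, parallel_seconds: float) -> float:
    """Return how many times faster the parallel run finished."""
    if sequential_seconds <= 0 or parallel_seconds <= 0:
        raise ValueError("durations must be positive")
    return sequential_seconds / parallel_seconds


def percent_faster(sequential_seconds: float, parallel_seconds: float) -> float:
    """Express the speedup as a percentage gain over the sequential run."""
    return (speedup_factor(sequential_seconds, parallel_seconds) - 1.0) * 100.0


def parallel_efficiency(factor: float, executors: int) -> float:
    """Fraction of the ideal linear speedup that was actually achieved."""
    if executors <= 0:
        raise ValueError("executors must be positive")
    return factor / executors


# Hypothetical durations: 369 s on one executor, 100 s across four.
factor = speedup_factor(369.0, 100.0)
gain = percent_faster(369.0, 100.0)
efficiency = parallel_efficiency(factor, 4)
print(f"{factor:.2f}x speedup, {gain:.0f}% faster, {efficiency:.1%} efficiency")
```

Under this reading, 269% corresponds to a 3.69x speedup, close to the 4x ceiling for four executors. If the figure instead means the run was 2.69 times as fast as the sequential one, the efficiency would be correspondingly lower.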

Every failed test gets an AI analysis panel — not just the error, but the cause, what the screenshot shows at the moment of failure, and a step-by-step reconstruction of exactly what the test did before it broke.